Most instructor-led training (ILT) programs end with a feedback form and a scorecard that tells you that participants had a good time. That’s a start, but it’s not impact.

The challenge with ILT is that the most meaningful outcomes don’t happen in the room. They happen back at work, on Monday, when a manager handles a difficult conversation differently, or a new hire applies a process they’ve just been trained on without needing to ask for help. That’s where training either works or it doesn’t, and most L&D teams have very little visibility into what happens after the session or workshop is over.

In this article, we’ll cover what you need to know to start assessing and communicating the effectiveness of your instructor-led training programs.

What is training effectiveness in instructor-led training?

Training effectiveness in ILT means the degree to which a training program produces real, observable change in participants’ knowledge, behavior, and job performance, and whether those changes contribute to meaningful outcomes for the organization.

It’s the difference between a session that felt useful and one that actually was.

Measuring training effectiveness matters for three reasons. First, it helps you design better programs. Second, and quite practically, it gives you evidence to justify investment, so you can show leadership that L&D isn’t just a cost center. Third, and less obviously, measuring training effectiveness actually supports participants in making the changes they want to make. Being asked about progress in regular check-ins, for example, is a proven way to encourage participants to keep practicing.

How is measuring ILT effectiveness different from evaluating e-learning programs?

The distinction from e-learning is worth making explicit, because it shapes everything about how you approach measurement.

In self-paced e-learning, there is already data infrastructure: completion rates, quiz scores, time-on-module, and click-through paths. These are imperfect proxies for learning, but they’re trackable by default, built into the platform, and available the moment a learner finishes. In ILT, none of that exists.

What you have instead is a room full of people, a skilled facilitator, and a set of interactions that are fundamentally social, contextual, and unscripted. The learning happens in conversation, in the friction of a role-play, in the moment a participant realizes their assumption was wrong. That’s the particular power of instructor-led training. It’s also why measuring it is harder.

If this makes you wonder how ILT actually works, and what can make it great, we’ve written a whole post about that.

Photo credit: Fabio Riva, from the 2026 Facilita gathering in Milan, Italy.

The other key difference is transfer. E-learning tends to focus on knowledge acquisition: can the learner recall the information? ILT tends to focus on behavior and application: can the learner do something differently as a result? This is a higher bar, and it pushes the meaningful evidence further out in time.

You won’t see the proof of a well-run ILT session in a quiz score the next day. You’ll see it in how someone runs a meeting three weeks later, or handles a complaint they used to escalate, or explains a process to a colleague without needing to check the manual. Clearly, the measurement approach for ILT has to be designed differently from the start.

This post covers how to do that in the specific context of ILT, a format where the learning is qualitative, relational, and hard to reduce to a single number, which is why it’s so often measured badly, or not at all.

What “effective” actually means

Before getting into how to measure, it’s essential to agree on what you’re measuring for.

Most L&D teams default to measuring reaction: did participants enjoy the session? Did they find it relevant? These are worth capturing, but they tell you almost nothing about what happens next.

The harder and more important questions are whether participants changed their behavior on the job, and whether those behavioral changes contributed to anything the organization cares about: lower turnover, faster onboarding, fewer errors, stronger team performance.

Before designing or delivering anything, ask: Are you paying me to deliver content, or are you paying me to drive change? The answer determines everything that follows.

Chris Taylor, CEO of Actionable

Because “effective” means different things to different people, keep two things in mind when defining what it means for your program.

First, expect the definition of effective to differ for each program and session. What matters is defining it together with your stakeholders, and early.

Second, remember that effectiveness has many dimensions. This is where a framework comes in handy: it gives you a shared vocabulary with clients, managers, and commissioners. The Kirkpatrick model is the one to know. It has been the dominant framework in L&D for decades, it’s baked into how most organizations think about training evaluation, and understanding it makes you a more credible conversation partner when impact comes up. Here’s a quick overview.

The Kirkpatrick model: a quick orientation

Donald Kirkpatrick’s four-level model has been the standard framework for training evaluation since the 1950s. It breaks the question of what you’re actually trying to measure into four distinct levels.

The four levels are:

- Level 1: Reaction

Did participants find the training favorable and relevant? Typically measured through end-of-session feedback surveys, satisfaction ratings, and open comments. Easy to collect and worth doing, but limited as a standalone signal.

- Level 2: Learning

Did participants actually acquire the knowledge or skills? In ILT, this might mean a knowledge check at the end of the session, a short quiz, a practical exercise, or a peer debrief. The challenge is that performance in the room doesn’t always predict performance back at work.

- Level 3: Behavior

Are they applying what they learned on the job? This is where ILT measurement gets genuinely difficult. Evidence tends to come from manager observations, self-reported reflection data, peer feedback, or performance reviews (collected weeks or months after the session, not on the day).

- Level 4: Results

Did that application produce outcomes the organization cares about? Think reduced staff turnover, faster onboarding, fewer customer complaints, and improved team satisfaction scores. These are the metrics leadership cares about, and also the hardest to attribute directly to a training program.

Jack Phillips later added a fifth level, ROI, which expresses the financial return on a training investment as a percentage. It’s worth knowing this level exists in case stakeholders bring it up. However, applying it rigorously requires a level of pre- and post-training data infrastructure that most ILT programs don’t have.
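For reference, the ROI percentage associated with Phillips’s methodology is usually written as net program benefits (monetized benefits minus program costs) divided by program costs. The figures in the second line are hypothetical, chosen only to show the arithmetic.

```latex
\text{ROI}\ (\%) = \frac{\text{program benefits} - \text{program costs}}{\text{program costs}} \times 100

% Hypothetical example: 60,000 in monetized benefits, 40,000 in program costs
\text{ROI} = \frac{60\,000 - 40\,000}{40\,000} \times 100 = 50\%
```

The arithmetic is the easy part. The hard part is putting a defensible monetary value on the benefits in the numerator, which is exactly where the missing pre- and post-training data makes itself felt.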

Most programs do Levels 1 and 2 well. Levels 3 and 4 are where things fall apart, and the reason is structural: the evidence for behavior change lives somewhere else entirely. It’s back at work, weeks after the session ended, in a context the trainer has no direct visibility into.

The State of Facilitation 2026 report puts numbers to what most practitioners already sense. Fewer than one in three facilitators has agreed measurable performance indicators with their clients before a program begins. This means that for most programs, impact assessment becomes retrospective and uncertain, constructed after the fact rather than designed in from the start. And when respondents were asked what most gets in the way of lasting impact, 43.5% pointed to the same thing: a lack of follow-up conversations after the session ends.

There is also the attribution problem. The report found that 41% of facilitators identify isolating the specific effect of their work as one of the biggest challenges in impact monitoring. Change rarely has a single cause. Behavior on the job is shaped by management culture, team dynamics, workload, and a dozen other variables that have nothing to do with what happened in the training room. This complexity is real, and it is often used, consciously or not, as a reason not to measure at all.

But complexity doesn’t make impact assessment optional. It just means the tools need to match the territory.

The Institute for Transfer Effectiveness, founded by Melanie Martinelli, has built its practice around this problem, and I highly recommend its resources. Its 12 Levers of Transfer Effectiveness framework identifies the factors, spanning the learner, the training design, and the organizational environment, that determine whether learning actually transfers. For practitioners who want to go deeper into the Kirkpatrick model specifically, the institute also offers a Kirkpatrick Bronze certification.

Why so few programs measure change well, and how to fix it

Looking at the Kirkpatrick model, we’ve seen that the biggest challenges lie at Levels 3 and 4, where longer-term behavioral and organizational change happens. Most trainers collect feedback after sessions; far fewer go back to check whether anything actually shifted. The pitfalls that produce this measurement gap are predictable, and most have practical fixes.

Avoiding the topic vs co-designing impact

Honestly, avoiding the topic of impact altogether is the most common and most damaging pitfall. If our scoping conversations only cover content, logistics, and learning objectives, there’s nothing to measure against later.

The fix is using the scoping call to agree on observable outcomes before design begins. What should be different after this program? What would stakeholders proudly report in a meeting six months from now? What behavior would show an outsider that the program worked? These questions, drawn from the Institute for Transfer Effectiveness’s discovery process, are worth working through explicitly with your client or commissioning manager. The answers belong in your session documentation from day one.

SessionLab Pages is the natural home for this: scoping notes, learning goals, agreed success indicators, and follow-up responsibilities all in one place, linked directly to the session you’re designing.

As facilitators, we know how to ask good questions. In scoping conversations with clients, though, we often default to logistics first: room setup, timing, lunch breaks. These do matter, and clients tend to be comfortable with such topics. But before you get there, it’s worth pausing to ask: “What does success look like for you? What do you hope will be different as a result of this?”

These aren’t always easy questions to ask. You might be dealing with a manager who needs to tick a box and make sure “the training was delivered.” People are busy. Some would rather not dig into the uncomfortable territory that opens up when you start talking about change.

I saw this recently with a client who deflected my first attempt with a well-timed “Let’s get the logistics out of the way first,” hoping we’d never get back to the deeper questions. I let her lead on the first call, then proposed a face-to-face meeting specifically to discuss the purpose and meaning of the project. It led to some real discoveries, some uncomfortable truths about her role, and (I hope) a shift in how she saw me: from provider of a one-off service to thinking partner.

Sometimes you’ll have to give in and just deliver the training. But it’s usually worth pushing for the deeper conversation first.

“Measuring is too hard” vs proxy indicators

Sometimes what you’re trying to measure can’t be measured directly, either because the data doesn’t exist, because collecting it would be prohibitively expensive, or because the outcome unfolds over time across too many variables to isolate cleanly.

This is where proxy indicators become essential.

Having proxy indicators means agreeing upfront on observable signals that credibly suggest the intended change is happening: for example, fewer escalations between teams, faster onboarding times, higher quality decisions in project reviews, or stronger peer feedback scores. You’re not claiming the training caused these things directly. You’re saying that if the learning transferred, you’d expect to see these signals, and you’re watching for them.

Proxy indicators may not always be precise, but they should be approximately right.

Monitoring and evaluation specialist Ann Murray-Brown

The key is choosing your proxies before the program starts, not retrospectively once you’ve seen the results.
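If you track your agreed indicators in a spreadsheet or script rather than only in prose, it helps to write each one down before the program starts together with its baseline and the direction you’d expect it to move if the learning transferred. The Python sketch below shows one way to do that. The class, function, and indicator names and all the numbers are hypothetical, and a “moving as expected” result only means the signal is consistent with transfer, not that the training caused it.

```python
from dataclasses import dataclass
from typing import Literal


@dataclass(frozen=True)
class ProxyIndicator:
    """An observable signal agreed with stakeholders before the program starts (hypothetical structure)."""
    name: str
    baseline: float                  # value recorded before the training
    expected: Literal["up", "down"]  # direction expected if the learning transferred


def moved_as_expected(indicator: ProxyIndicator, latest: float) -> bool:
    """Return True if the latest reading moved from baseline in the agreed direction.

    A True result means the signal is consistent with transfer; it does not
    show that the training caused the change.
    """
    if indicator.expected == "up":
        return latest > indicator.baseline
    return latest < indicator.baseline


# Hypothetical indicators, written down at the scoping stage.
indicators = [
    ProxyIndicator("cross-team escalations per month", baseline=12, expected="down"),
    ProxyIndicator("average peer feedback score (1-5)", baseline=3.4, expected="up"),
]

# Hypothetical readings collected at a later check-in.
latest_readings = {
    "cross-team escalations per month": 9,
    "average peer feedback score (1-5)": 3.3,
}

for indicator in indicators:
    ok = moved_as_expected(indicator, latest_readings[indicator.name])
    print(f"{indicator.name}: {'moving as expected' if ok else 'not yet'}")
```

Recording the expected direction up front is the point: it stops you from deciding after the fact which movements count as success.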

I face this problem concretely in my own facilitation training program. Students work with me across a series of workshops spaced about a month apart, and between sessions they should have opportunities to practice their new skills. To evaluate their learning, I ask students to fill out a self-reflection sheet after each practice opportunity, describing what they tried and what happened, and I ask school staff to fill out observation sheets.

Neither is high-overhead. Together, they provide both self-reported and external observation data, and that combination is the measurement architecture that actually matters, even without a formal framework. The proxy indicators we look for include whether students take on facilitation responsibilities independently and whether staff notice different behavior in group settings, especially during weekly meetings. Not perfectly measurable. Approximately right.

Not establishing a baseline vs collecting pre-training data

If you don’t know where participants started, you can’t show what changed. A short pre-training survey gives you the reference point you need. There’s a secondary benefit too: knowing your audience better informs your design choices.

In a separate article, you can find a question bank for all your pre- and post-training survey needs. Some questions are designed to help with logistics or to prepare participants; others help you establish where the group stands, so you can refer back to those data points in your final reporting.

When working with a group of local administrators on a new regional strategy, for example, I sent them a pre-workshop survey asking them to rate their familiarity with key terms and aspects of the new strategy on a sliding scale. Asking the same questions at the end of the workshop, and again a few weeks later, was a quick and easy way to show my client that the sessions had achieved the intended effect.
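The before, end-of-workshop, and follow-up comparison described above comes down to simple arithmetic: average each survey round and subtract the baseline average. A minimal Python sketch, assuming a single familiarity item rated on a 1 to 5 scale; the responses and the function name are hypothetical.

```python
from statistics import mean
from typing import Dict, List

# Hypothetical 1-5 familiarity ratings for one survey item, one value per participant.
responses: Dict[str, List[int]] = {
    "before": [2, 1, 3, 2, 2],
    "end of workshop": [4, 3, 4, 4, 3],
    "follow-up": [4, 3, 4, 3, 4],
}


def change_from_baseline(rounds: Dict[str, List[int]], baseline: str = "before") -> Dict[str, float]:
    """Mean rating of each survey round minus the mean rating of the baseline round."""
    base = mean(rounds[baseline])
    return {name: round(mean(scores) - base, 2) for name, scores in rounds.items()}


changes = change_from_baseline(responses)
for round_name, delta in changes.items():
    print(f"{round_name}: {delta:+.2f} vs baseline")
```

With real data you would repeat this for each survey item. With small groups, it’s worth showing the individual responses alongside the averages rather than over-reading small differences.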

Collecting data only at the end of the session vs sequential check-ins

Post-training surveys capture how people felt in the room, not what they do on Monday. The fix is to build sequential check-ins into the program from the start. Research from Actionable.co identifies three layers of evidence, each collected at a different time: self-reported change in the first 20-30 days, observable change validated by peers or managers at 30-60 days, and KPI-aligned signals at 3-9 months.

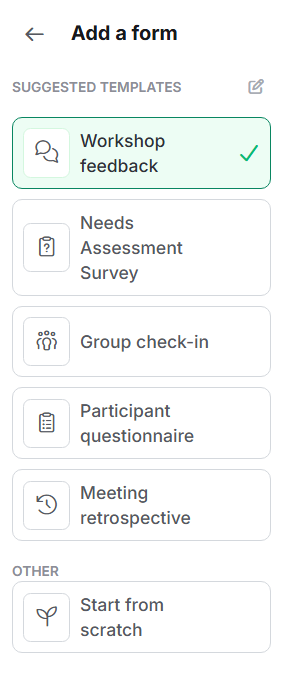

SessionLab tip: SessionLab Forms makes it easy to build these as separate, lightweight surveys all attached to the same session, so the whole data collection arc lives in one place rather than scattered across separate tools.

What to collect and when

With the pitfalls addressed, the practical structure is not that complicated. It’s all about including reflections on impact throughout your entire workflow, instead of tacking some numbers on as an afterthought.

- Before the training, collect a baseline. A short pre-training survey asking participants to self-assess on the specific behaviors or competencies the program is targeting gives you the reference point you need. At the same time, document the agreed proxy indicators in your SessionLab Pages document, alongside your scoping notes and learning goals.

- During the training, it’s essential to capture commitments and decisions. What are participants going to do differently? What did the group agree on? These documented intentions become the anchors for follow-up conversations.

- After the training, the sequential cadence of checking in matters. In the first 20-30 days, collect self-reported reflection data through short check-ins, asking participants how they’re applying what they learned.

This isn’t merely data collection. As Actionable’s research shows, the act of regular reflection correlates directly with the likelihood of behavior change sticking. Participants who check in frequently in the first week after a training are substantially more likely to report meaningful change at 30 days. The follow-up structure is part of the intervention, not separate from it.

- At 30-60 days, look for observable signals: manager observations, peer feedback, movement on your agreed proxy indicators. From there, check in on KPI-aligned outcomes at whatever frequency those metrics are tracked in your organization.
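Check-ins are easier to keep if the windows become calendar dates on the day you plan the program. A minimal Python sketch, assuming the windows cited earlier from Actionable’s research (20 to 30 days, 30 to 60 days, and 3 to 9 months after the session). The function names and the example session date are hypothetical, and the sketch is independent of any particular tool.

```python
import calendar
from datetime import date, timedelta
from typing import Dict, Tuple


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping to the last day of shorter months."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def follow_up_windows(session_day: date) -> Dict[str, Tuple[date, date]]:
    """Start and end dates for each evidence layer (windows assumed from the text)."""
    return {
        "self-reported reflection": (session_day + timedelta(days=20), session_day + timedelta(days=30)),
        "peer/manager observation": (session_day + timedelta(days=30), session_day + timedelta(days=60)),
        "KPI-aligned signals": (add_months(session_day, 3), add_months(session_day, 9)),
    }


# Hypothetical session date.
for layer, (start, end) in follow_up_windows(date(2026, 3, 10)).items():
    print(f"{layer}: {start.isoformat()} to {end.isoformat()}")
```

Whole-month shifts clamp to the end of shorter months (31 January plus one month gives 28 February in a non-leap year), which is close enough for planning check-ins.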

Here at SessionLab, we tested a lightweight version of this after running internal training on AI workflows. Rather than relying on a single post-training survey, we kept monitoring impact through async one-to-one check-in questionnaires sent to participants in the following weeks, asking how they were using the strategies and tools covered in the sessions. No elaborate system was required. The habit of going back, asking, and drawing lessons from the answers is what made the difference.

How SessionLab helps keep training effectiveness measurement organized

Measuring training effectiveness requires a connected workflow: scoping conversations that surface the right outcomes, feedback collected at the right moments, and a clear record of what was actually delivered. SessionLab is designed to support all of this in one place, across the full lifecycle of a program.

- Pages for scoping and outcome definition. Before you design anything, use a Page to document the outcomes you’ve agreed on with your client or commissioning manager, the proxy indicators you’ll be watching for, and who owns follow-up. This sits inside the same session as your agenda, so the goals you set in the scoping conversation stay visible throughout design and delivery, rather than buried in a separate document that gets forgotten.

- Forms for data collection across the full arc. Build your pre-training baseline survey, your post-session feedback form, and your 30- and 60-day follow-up check-ins as separate Forms attached to the same session. You can share each one via a link or QR code, review responses as they come in, and export results or use SessionLab’s AI assistant to summarize key findings. Everything stays connected to the session it belongs to.

For larger teams managing multiple programs, SessionLab’s Business and Enterprise plans add features that make reporting and quality control significantly easier to sustain at scale.

- Standardized templates for consistent delivery at scale. Using SessionLab, your L&D team can maintain a centralized library of session templates, complete with pre-built agendas, Pages for briefing and preparation, and attached feedback Forms. Facilitators create new sessions from these templates rather than designing from scratch, which means quality and structure are consistent across every cohort and every facilitator. You can also define custom default columns in the Session Planner, renaming them to things like “Learning Objectives” or “Facilitator Notes”, so every session in your organization follows the same logic and uses the same language.

- Consistent feedback collection across programs. SessionLab supports standardized Form templates at the workspace level, making it possible to use the same feedback questions across all sessions and compare results across cohorts, facilitators, and programs. Each session has its own instance of the Form, keeping feedback tied to specific deliveries while making patterns visible over time.

- Session completion tracking for reliable delivery records. Once a session is delivered, you can mark it as Closed. This locks the agenda as a confirmed delivery record, closes any attached Forms for new submissions, and logs who facilitated the session and when.

Every closed session automatically appears in the Closed Sessions Dashboard in the Reports section of your workspace, showing total sessions delivered, total time, facilitator, date, and duration, filterable by date range and facilitator.

For L&D teams managing programs across multiple facilitators, this makes it easier to see who is delivering sessions, how often, and how those sessions are performing. This information can then support coaching, quality improvement, and recognition across your facilitation team.

Together, these features support what good ILT measurement actually requires: outcomes defined before design, data collected at the right moments, delivery confirmed and logged, and enough consistency across programs to make comparisons meaningful over time.

How to tell a training impact story

Collecting data is only half the job. The other half is translating it into something leadership can act on.

The gap here is real. As the State of Facilitation 2026 report found, facilitators and the organizations that commission them often speak different languages. In a nutshell, facilitators describe methodologies and processes; decision-makers want to hear about outcomes.

A good training impact story has three components.

- Quantified results

What measurably changed? This doesn’t have to mean revenue figures. It can mean your proxy indicators: the onboarding time that dropped, the escalation rate that fell, the peer feedback score that rose.

- Qualitative evidence

What are participants and their managers saying? The IAF Facilitation Impact Awards framework treats participant quotes as a distinct and essential form of evidence, not decoration but proof. Ask in your follow-up surveys what changed, what participants did differently, and what they would tell a colleague about the program. Then ask permission to share those reflections.

- A clear before/after arc

Set the scene, describe what the situation was before the program, and show what’s different now. This is the structure that makes the impact legible to someone who wasn’t in the room.

Awarded organizations are able to tell a clear and concise story about the impact they wanted to see and how they used facilitation to achieve it.

Julia Donohue and Shalaka Gundi – Facilitation Impact Awards team

For more on building this kind of evidence and storytelling habit into your practice, download SessionLab’s Guide to High-Impact Facilitation. It covers the full arc from scoping to impact report, with practical tips for facilitation impact monitoring and storytelling.

Where to start: three things to do before your next program

The measurement gap in ILT isn’t a technical problem. It’s a habit problem. And like most habits, it’s easier to build if you start small and start early.

If you want to do one thing differently after reading this post, make it one of these.

(1) Have the impact conversation at the scoping stage, not after. Before you finalize any learning objectives, ask your client or commissioning manager what they’d be proud to report six months from now. What would look different? What would people be doing that they aren’t doing today? Having this conversation early means you’re designing toward something measurable from the start.

(2) Choose two or three proxy indicators and document them. You don’t need a comprehensive measurement plan. You need a short list of observable signals that you and your stakeholders agree would indicate the learning transferred. Write them into your session documentation in SessionLab Pages alongside your learning objectives, so they’re visible throughout the design process and easy to reference when it comes time to follow up.

(3) Schedule your follow-up before the session runs. The most common reason impact data never gets collected is that follow-up gets added to a to-do list and never happens. Build at least one check-in into your program calendar before you deliver the training, whether that’s a 30-day survey, a manager observation prompt, or a short async question to participants. Scheduling it in advance is the difference between data you have and data you meant to collect.

None of these require extra budget or a new system. They require intention, and the habit of treating measurement as part of the design, rather than an afterthought.

Ready to go further? Download the SessionLab Guide to High-Impact Facilitation for a complete overview, infographics, and activity sheets covering how to design for outcomes, monitor change, and make the value of your work visible to the people who need to see it.

The post How to measure training effectiveness in instructor-led training first appeared on SessionLab.